Gartner has named agentic AI the top technology trend for 2025, and manufacturing is among the first sectors feeling the structural weight of that designation. Autonomous AI agents - systems that plan, reason, and execute multi-step actions across interconnected production environments - are moving well past semiconductor fabs into automotive plants, chemical facilities, packaging lines, and energy operations. The central question for operations and digital transformation leaders is no longer whether to deploy agentic AI. It is how to do so without trading operational resilience for uncontrolled autonomy.

Agentic AI systems act autonomously to achieve specific goals, moving beyond preprogrammed instructions and transforming smart manufacturing from a data-rich to a decision-rich environment. That shift creates both the opportunity and the obligation to build governance architecture never required of conventional automation.

What Agentic AI Actually Does on the Shop Floor

Traditional automation is deterministic: a PLC executes a fixed rule, and the outcome is predictable. Predictive analytics introduced intelligence but not autonomy - a predictive maintenance model might forecast bearing failure in 72 hours, but the insight sits in a dashboard waiting for an overloaded maintenance planner to notice, evaluate, and act.

Agentic AI closes that gap. Where predictive AI tells operators a bearing will fail in 22 days, agentic AI drafts the repair plan, checks parts inventory, schedules the technician, and coordinates the work order - all without human intervention. On quality lines, instead of merely flagging a defect, a system can adjust machine settings, trigger quality checks, and trace root causes across upstream processes.

The cross-sector relevance is significant. In energy-intensive industries like steel or chemicals, agentic systems can dynamically manage consumption by aligning production activities with pricing and environmental goals - shifting certain processes to off-peak hours when energy costs are lower. Supply chain agents can automatically assess logistics disruptions across all plants, shift orders to facilities with sufficient inventory, and reroute finished goods to maintain customer delivery commitments, all orchestrated without waiting for a morning planning meeting.
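As a rough illustration of the off-peak shifting described above, the sketch below searches an hourly price forecast for the cheapest contiguous window long enough to run a process. The function name `cheapest_window` and the price figures are hypothetical and used only for illustration. A real scheduler would also need to respect process constraints, grid-compliance rules, and emissions targets.

```python
from typing import Sequence, Tuple


def cheapest_window(prices: Sequence[float], duration_h: int) -> Tuple[int, float]:
    """Return (start_hour, total_cost) of the cheapest contiguous window."""
    if duration_h <= 0 or duration_h > len(prices):
        raise ValueError("duration must fit inside the price horizon")
    window_cost = sum(prices[:duration_h])
    best_start, best_cost = 0, window_cost
    for start in range(1, len(prices) - duration_h + 1):
        # Slide the window one hour: add the newest price, drop the oldest.
        window_cost += prices[start + duration_h - 1] - prices[start - 1]
        if window_cost < best_cost:
            best_start, best_cost = start, window_cost
    return best_start, best_cost


# Made-up energy prices for eight consecutive hours (illustrative only).
hourly_prices = [90, 85, 40, 35, 38, 70, 95, 100]
start, cost = cheapest_window(hourly_prices, duration_h=3)
print(start, cost)  # 2 113
```

With these sample prices, a three-hour process would be moved to hours 2 through 4, the lowest-cost stretch in the forecast.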

The performance data supports the investment case. A peer-reviewed study on agentic predictive maintenance in ceramic tile manufacturing reported 94% predictive accuracy, a 67% reduction in false positives, and a 43% decrease in unplanned downtime, with a payback period of 1.6 years and a net present value of €447,300 over five years. Adoption projections reflect this momentum: Deloitte predicts a fourfold increase in agentic AI adoption in manufacturing by 2026, from 6% to 24%.

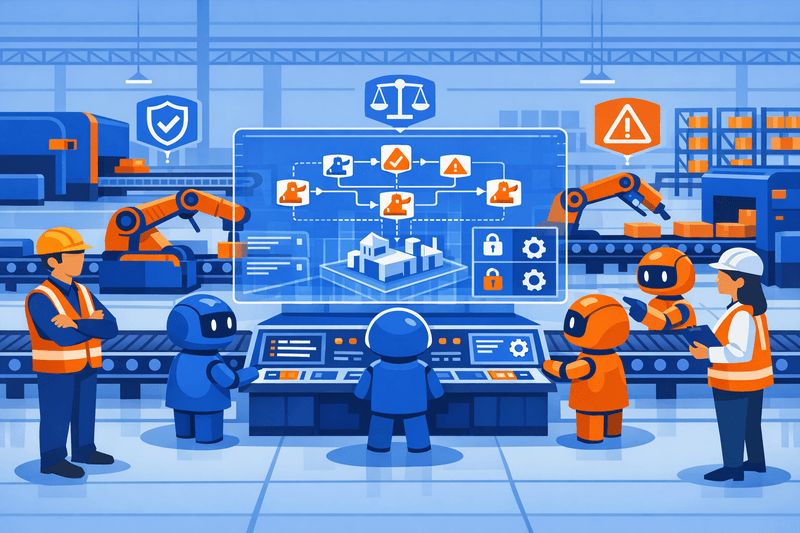

Governance Architecture: Defining Accountability When Agents Act

The core governance challenge is not technical - it is organizational. The question shifts from "Is the model accurate?" to "Who is accountable when the system acts?" Governance must define scope, inventory, and ownership, and make each auditable.

Many approaches to agentic AI governance rely on reactive oversight, applying controls after systems are deployed rather than embedding them into system design. Reliable governance requires clear definitions of behavior, success and failure conditions, and enforceable, pre-defined limits built into the system from the start to minimize silent and recurring failures.

For manufacturing operators, this translates into a tiered autonomy model. Low-stakes actions - reordering consumables, logging sensor anomalies - can run fully autonomously. High-consequence decisions - initiating equipment shutdowns, releasing non-conforming product batches, modifying safety interlocks - require explicit human approval with documented escalation paths. Strict industrial safety requirements constrain the degree of autonomous operation permitted, necessitating human oversight for safety-critical decisions; in one studied deployment, this conservative approach resulted in an 8% escalation rate to human operators for conflict resolution.
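A tiered autonomy model of this kind can be sketched as a simple authorization gate. The action names below are hypothetical labels derived from the examples in the preceding paragraph, and `authorize` is an illustrative function, not part of any particular platform. The sketch assumes that any action missing from the classification table should fail closed and require human approval.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Tier(Enum):
    AUTONOMOUS = "autonomous"          # agent may act without approval
    HUMAN_APPROVAL = "human_approval"  # agent must escalate before acting


# Hypothetical action names, based on the examples in the text.
ACTION_TIERS = {
    "reorder_consumables": Tier.AUTONOMOUS,
    "log_sensor_anomaly": Tier.AUTONOMOUS,
    "initiate_equipment_shutdown": Tier.HUMAN_APPROVAL,
    "release_nonconforming_batch": Tier.HUMAN_APPROVAL,
    "modify_safety_interlock": Tier.HUMAN_APPROVAL,
}


@dataclass
class Decision:
    action: str
    allowed: bool
    reason: str


def authorize(action: str, approved_by: Optional[str] = None) -> Decision:
    """Gate an agent action by its autonomy tier; unknown actions fail closed."""
    tier = ACTION_TIERS.get(action, Tier.HUMAN_APPROVAL)
    if tier is Tier.AUTONOMOUS:
        return Decision(action, True, "low-stakes action, runs autonomously")
    if approved_by:
        return Decision(action, True, f"approved by {approved_by}")
    return Decision(action, False, "escalated: human approval required")


print(authorize("reorder_consumables").allowed)          # True
print(authorize("initiate_equipment_shutdown").allowed)  # False
```

Defaulting unclassified actions to the approval tier is a deliberate choice in this sketch: a new capability added to an agent stays gated until someone explicitly classifies it.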

This connects directly to the broader data governance challenges already present in MES deployments: without clean, semantically consistent data feeding agents, autonomous decisions rest on unreliable inputs. Governance of AI agents must extend upstream to the data infrastructure that informs them.

The table below maps principal agentic AI use cases across sectors to their key governance requirements:

| Use Case | Sectors | Agent Action | Governance Priority |
|---|---|---|---|
| Predictive Maintenance | Automotive, Energy, Discrete Mfg. | Orders parts, schedules downtime, dispatches technicians | Audit trail for work orders; human override for safety-critical shutdowns |
| Autonomous Scheduling | Consumer Electronics, Pharma | Re-sequences jobs, adjusts recipes, shifts maintenance windows | Change logs; conflict escalation to human planners |
| Anomaly Detection | Chemicals, Semiconductors, Food & Bev. | Detects sensor deviations, triggers containment protocols | Explainability layer; defined false-positive thresholds |
| Quality Control | Automotive, Packaging, Aerospace | Halts line, redirects batch, traces root cause upstream | Regulatory traceability (ISO, FDA); human sign-off on batch disposition |
| Energy Optimization | Steel, Chemicals, Process Industries | Shifts production to off-peak windows, balances load | Grid-compliance checks; emissions reporting accuracy |
| Supply Chain Orchestration | Cross-industry | Reassigns suppliers, reroutes goods, updates delivery timelines | Contract authorization limits; supplier SLA guardrails |

Safety, Reliability, and Explainability

For agentic AI to scale safely across the enterprise, observability, security, governance, and controls - providing traceability, accountability, anomaly detection, and cost discipline - must be built in from the start, not bolted on later.

In industrial operations, this is non-negotiable. Industrial agentic AI systems operate above the real-time control layer; their purpose is to orchestrate decisions across operations without interfering with deterministic safety systems. An agent that misinterprets a sensor reading and triggers an unnecessary shutdown in a chemical plant is not merely an IT problem - it is a safety and financial event.

Frameworks that embed explainable AI and trust calibration mechanisms ensure transparency and safe human-machine collaboration. Practically, this means every autonomous action must be logged with its data inputs, decision logic, and confidence score. Operators and auditors must be able to reconstruct the chain of reasoning behind a given output - a requirement that shapes platform selection and architecture design from day one.
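One way to picture the logging requirement is a structured record written for every autonomous action. The sketch below is a minimal illustration, not a reference schema: the class `ActionRecord`, its field names, and the sample values are all assumptions made for this example.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict


@dataclass
class ActionRecord:
    agent_id: str
    action: str
    data_inputs: Dict[str, Any]   # the readings the agent acted on
    decision_logic: str           # human-readable rationale
    confidence: float             # expected in the range 0..1
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")

    def to_json(self) -> str:
        """Serialize to one JSON line, suitable for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical example values.
record = ActionRecord(
    agent_id="maint-agent-01",
    action="create_work_order",
    data_inputs={"sensor": "bearing_vibration", "rms_mm_s": 7.4},
    decision_logic="vibration RMS above alert threshold for three readings",
    confidence=0.91,
)
line = record.to_json()
```

Writing one JSON line per action keeps the log easy to append to and to replay, so an auditor can reconstruct what the agent saw and why it acted.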

Reliability standards demand redundant communication channels and failsafe modes; one studied system achieved a 99.8% message delivery success rate, approaching but not fully meeting the 99.99% threshold often required for safety-critical environments. That gap matters in process industries where missed messages can cascade.
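To make that gap concrete, the short calculation below compares expected undelivered messages at the two rates. The volume of one million messages is a hypothetical figure chosen for illustration; only the two percentages come from the text. Exact fractions are used to avoid floating-point rounding.

```python
from fractions import Fraction


def expected_missed(messages: int, delivery_rate: str) -> Fraction:
    """Expected undelivered messages at a given delivery success rate."""
    return messages * (1 - Fraction(delivery_rate))


volume = 1_000_000  # hypothetical message volume, for illustration only
observed = expected_missed(volume, "0.998")    # rate reported for the studied system
required = expected_missed(volume, "0.9999")   # threshold cited for safety-critical use
print(observed, required, observed / required)  # 2000 100 20
```

At this volume, 99.8% delivery leaves about 2,000 messages undelivered against 100 at 99.99%, a twentyfold difference even though the two percentages look close.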

OT Cybersecurity: Agents as a New Attack Surface

Agentic AI introduces a qualitatively different threat profile to operational technology environments. An autonomous agent functions as a tireless digital worker, but it is also a potent insider threat - always on, never sleeping, and if improperly configured, capable of accessing privileged APIs, data, and systems with implicit trust.

Principles that underpin regulatory identity and access management - least privilege, segregation of duties, and auditable access reviews - provide a foundational framework extendable to autonomous AI agents. Legacy IAM protocols, however, were designed for a more deterministic era: they presume predictable application behavior and a single authenticated principal. Agentic AI violates both assumptions, and the coarse-grained, static permissions typical of legacy IAM are too inflexible to handle dynamic agent behavior.

For OT/IT environments already navigating converged architectures - a topic covered in depth in the analysis of MES-powered OT/IT convergence at Hannover Messe 2026 - the orchestration layer connecting AI agents with SCADA systems, PLCs, and MES platforms becomes a high-value target. Cybersecurity is now the single greatest barrier to achieving AI strategy goals, cited by 80% of enterprise leaders, and half of executives plan to allocate $10-50 million to secure agentic architectures and harden model governance.

Model update pipelines deserve particular attention. A compromised model update pushed to a maintenance scheduling agent across a multi-plant network constitutes a supply chain attack with physical-world consequences. Zero-trust segmentation, time-bound credentials, and cryptographic signing of model artifacts are baseline controls, not optional enhancements.
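The artifact-verification step can be sketched as follows. This example uses HMAC-SHA256 from the Python standard library as a stand-in so that it runs without dependencies. A production pipeline would more likely use asymmetric signatures, so that plants hold only a public verification key. The key, the artifact bytes, and the function names are hypothetical.

```python
import hashlib
import hmac


def sign_artifact(artifact: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over the model artifact bytes."""
    return hmac.new(key, artifact, hashlib.sha256).hexdigest()


def verify_artifact(artifact: bytes, key: bytes, expected_tag: str) -> bool:
    """Constant-time check that the artifact matches its published tag."""
    return hmac.compare_digest(sign_artifact(artifact, key), expected_tag)


# Hypothetical values; real keys belong in an HSM or secrets vault.
signing_key = b"demo-key-not-for-production"
model_bytes = b"model-weights-v1"   # stands in for a real model file
tag = sign_artifact(model_bytes, signing_key)

print(verify_artifact(model_bytes, signing_key, tag))                # True
print(verify_artifact(model_bytes + b"tampered", signing_key, tag))  # False
```

An agent host that refuses to load any model whose tag fails verification prevents a modified update from reaching the scheduling logic, whatever path the file took to arrive.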

Workforce Transitions and Ethical Deployment

The biggest risk in agentic AI deployment is not technical - it is cultural. If workers perceive AI as a surveillance tool rather than a co-pilot, adoption stalls entirely. Manufacturing enterprises that reframe agentic AI as workforce augmentation - rather than headcount displacement - consistently achieve better adoption outcomes.

Personnel need training in kill switches and prompt engineering, and the human-in-the-loop role demands a different skill set: the rigor to govern autonomous systems rather than simply execute tasks. This is a structural upskilling challenge, not a one-time training exercise.

The differentiator is no longer basic adoption - it is effective human-agent teaming, grounded in ethical practices and measurable outcomes. Entry-level hiring is already being reimagined: 64% of organizations have altered their hiring approach due to the influence of AI agents, reflecting demand for new competencies in data, automation, and responsible AI.

For industrial operators, ethical deployment also extends to labor transparency - communicating clearly with workforces about which tasks agents will handle, what decisions remain in human hands, and how performance metrics will (and will not) be applied to individuals working alongside AI systems.

Regulatory and Standards Landscape

Standards bodies are beginning to codify what manufacturers will soon be required to demonstrate. NIST's Center for AI Standards and Innovation announced the "AI Agent Standards Initiative" to foster industry-led technical standards and protocols that build public trust in AI agents and catalyze an interoperable agent ecosystem.

The EU AI Act, which entered into force in 2024, sets maximum penalties of €35 million or 7% of global annual turnover for certain violations - an incentive to embed oversight, logging, and evaluations into agent pipelines from day one. In Europe, the Cyber Resilience Act will apply starting in 2027, while the Digital Operational Resilience Act has applied since January 2025, establishing mandatory technical controls and governance requirements.

Insurers are also beginning to price agentic AI risk. Operators who cannot demonstrate audit trails, containment strategies, and incident response playbooks for autonomous systems will face increasing scrutiny at renewal.

Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. The operators who avoid that outcome will be those who treated governance as a design requirement - not an afterthought.

A Six-Step Governance Readiness Framework

Manufacturers preparing to scale agentic AI deployments beyond isolated pilots should evaluate readiness across six operational dimensions:

Agent Inventory & Identity Management - Maintain a live registry of all deployed agents: identity, permissions, credential provenance, and expiry. Without a comprehensive plan for managing agents - tracking who they are, what they can access, and when their permissions expire - organizations risk disaster through complexity and compromise.

Autonomy Tier Classification & Escalation Paths - Classify every agent action by consequence level. Safety-critical decisions, such as equipment shutdowns, require human approval and deterministic governance; they should not be executed on probabilistic recommendations alone.

Explainability & Audit Trail Infrastructure - Require agents to log data inputs, decision logic, and action outputs. Traceability is both an operational safeguard and an emerging regulatory obligation.

OT Cybersecurity Controls - Apply least-privilege access, zero-trust segmentation, and cryptographic model verification across every agent-to-OT interface. If an agent behaves unexpectedly, the blast radius is defined by the boundaries in place - not by the permissions left unrestricted.

Workforce Upskilling & Change Management - Identify roles being augmented before deployment. Build training programs around AI oversight, escalation protocols, and data validation - and communicate the deployment rationale transparently to affected teams.

Regulatory & Standards Alignment - Track NIST's AI Agent Standards Initiative, ISO/IEC 42001, IEC 62443 for OT security, and EU AI Act obligations. Conduct pre-deployment governance reviews with legal, cybersecurity, and compliance stakeholders.
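The first and fourth dimensions above, a live agent registry and least-privilege access, lend themselves to a short sketch. The example below is a minimal in-memory illustration under stated assumptions: `AgentEntry`, `AgentRegistry`, the agent IDs, and the permission strings are all hypothetical, and a real deployment would back the registry with an identity provider rather than a dictionary.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class AgentEntry:
    agent_id: str
    owner: str                     # accountable team or person
    permissions: FrozenSet[str]    # explicit grants only (least privilege)
    credential_expiry: datetime    # time-bound credential


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: Dict[str, AgentEntry] = {}

    def register(self, entry: AgentEntry) -> None:
        self._agents[entry.agent_id] = entry

    def is_allowed(self, agent_id: str, permission: str, now: datetime) -> bool:
        """Deny unknown agents, expired credentials, and ungranted permissions."""
        entry = self._agents.get(agent_id)
        if entry is None or now >= entry.credential_expiry:
            return False
        return permission in entry.permissions

    def expired(self, now: datetime) -> List[str]:
        """List agent IDs whose credentials have lapsed, for review."""
        return sorted(a.agent_id for a in self._agents.values()
                      if now >= a.credential_expiry)


now = datetime(2025, 6, 1, tzinfo=timezone.utc)  # fixed time for a repeatable example
registry = AgentRegistry()
registry.register(AgentEntry("scheduler-01", "planning-team",
                             frozenset({"read:mes", "write:schedule"}),
                             now + timedelta(hours=8)))
registry.register(AgentEntry("maint-01", "maintenance-team",
                             frozenset({"read:sensors"}),
                             now - timedelta(minutes=1)))

print(registry.is_allowed("scheduler-01", "write:schedule", now))  # True
print(registry.is_allowed("scheduler-01", "write:plc", now))       # False
print(registry.expired(now))                                       # ['maint-01']
```

Because every check passes through `is_allowed`, the blast radius of a misbehaving agent is bounded by what the registry grants, and lapsed credentials surface in a single query.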

Frequently Asked Questions

Q: How does agentic AI differ from conventional rule-based automation or traditional predictive analytics? Rule-based automation executes fixed logic without reasoning about context. Predictive analytics surfaces insights but relies on human action. Agentic AI combines autonomous reasoning, decision-making, and execution - it acts on the insight rather than waiting for a human to do so.

Q: What are the highest-priority use cases for initial agentic AI deployment in manufacturing? Predictive maintenance and autonomous scheduling typically offer the fastest time-to-value with contained risk profiles. Quality control and supply chain orchestration deliver higher impact but require more mature data infrastructure and governance controls before autonomous action is appropriate.

Q: How should manufacturers handle legacy OT systems that lack native connectivity for AI agents? Edge gateways using industrial protocols (OPC-UA, MQTT) can normalize data from legacy PLCs and equipment without direct integration. Agents interact with the gateway layer rather than the control system itself, preserving deterministic safety behavior at the OT level.

Q: What is the most common failure mode in early agentic AI deployments? Gartner research indicates that many agentic AI projects fail due to escalating costs, unclear business value, or inadequate risk controls. Governance designed post-deployment - rather than built into the architecture from the start - is the most consistent structural cause of these failures.

Q: Are there sector-specific regulatory requirements operators should anticipate? Pharmaceutical manufacturers face FDA 21 CFR Part 11 obligations for electronic records and signatures that extend to autonomous agent outputs. Automotive suppliers under IATF 16949 must maintain process traceability that AI-generated records must satisfy. Energy operators face IEC 62351 requirements for power system security that apply to any system interfacing with grid control infrastructure.